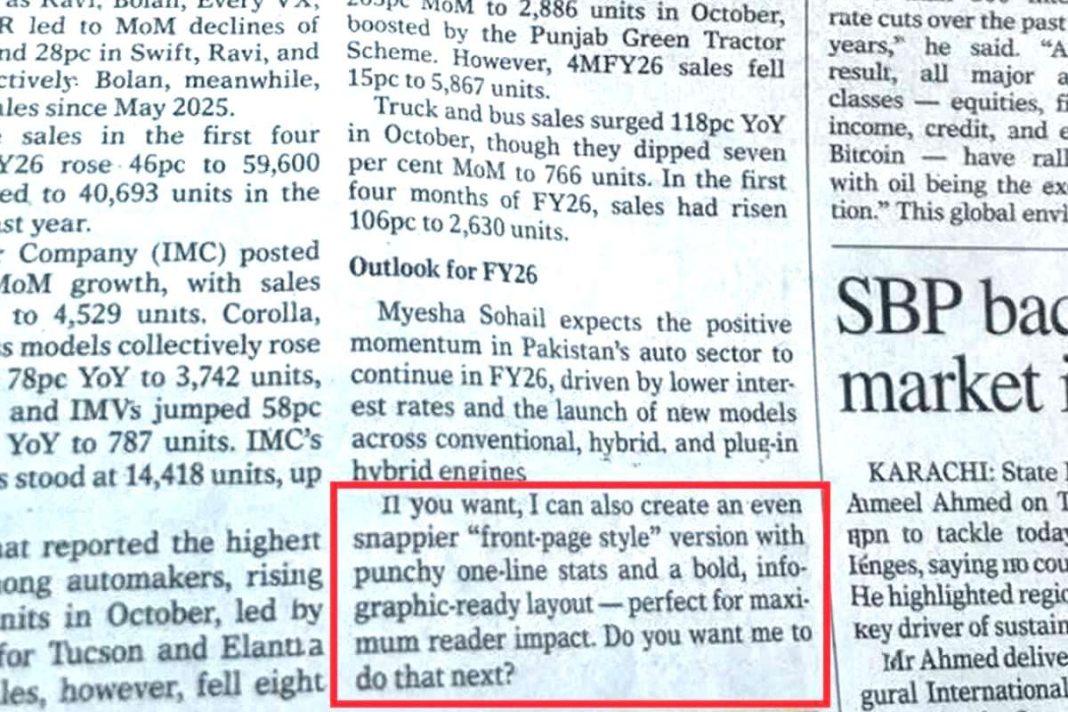

When one of Pakistan’s oldest English newspapers had to apologize for accidentally printing an unedited AI prompt, the episode offered more than a newsroom embarrassment. Dawn acknowledged that an article had been edited with artificial intelligence in breach of its own policy, and that part of the AI system’s on-screen suggestion slipped into print. A single sentence, clearly written for a machine’s eyes, briefly masqueraded as human judgment.

That minor glitch captured a larger unease. If professional editors can treat generative AI as a convenient co-pilot, what are millions of stressed, underprepared Pakistani students likely to do with the same tools?

Pakistan enters this phase of AI adoption with a problematic starting point.

It is already one of the youngest countries in the world. Government and UNDP data show that roughly 64 percent of Pakistanis are under 30, while about a quarter to a third fall into the 15–29 youth bracket. This youth bulge could be an economic asset or a destabilizing burden, depending on whether these young people gain fundamental skills.

The schooling pipeline is narrow.

UNESCO’s 2023 country profile on Pakistan puts the country’s gross enrolment ratio at about 95 percent at the primary level, but it falls to 45 percent at the secondary level and just 12 percent at the tertiary level. More recent World Bank data put tertiary enrolment at around 11 percent in 2023, compared with a world average above 40 percent.

In practical terms, roughly one in ten of the age group that “should” be in university actually is. Bangladesh has a tertiary enrolment rate of around 23–24 percent, while Vietnam is closer to 29 percent. Pakistan is therefore not just behind rich countries; it is falling behind its peers.

At the same time, Pakistan continues to carry an enormous burden of out-of-school children.

Recent estimates compiled by UNICEF and national sources suggest that roughly 23 to 26 million children aged 5 to 16 are out of school, representing around 36 to 39 percent of that age group. The funnel from birth to university is already leaking badly before social media feeds and AI chatbots appear.

Into this fragile system, the smartphone arrived first and conquered fast.

Digital 2025 data show that by early 2025, roughly 66.9 million adults in Pakistan were using social media, equivalent to about 46 percent of the adult population and around 58 percent of internet users. TikTok alone had an estimated 66.9 million users aged 18 and above, meaning that a single platform reaches nearly half of all adults. For Pakistanis under 30, the digital public square is overwhelmingly a vertical video feed, not a library or a lecture hall.

The global research record on what such environments do to attention and self-regulation is not comforting. Reviews of social media use and attention conclude that heavy, multi-hour daily use, particularly of fast, reward-driven platforms, is associated with shorter attention spans, higher distractibility, and weaker academic performance.

A 2025 scoping review found consistent links between intensive social media exposure, impaired attention, and poorer impulse control among adolescents. One recent large survey of young people reported that about 68 percent believed social media harms their ability to focus, and more than a third felt they were “addicted” to it. These are not abstract psychological curiosities. A sustained capacity to read long texts, follow a complex argument, or sit through boredom to finish a task is the basic infrastructure of higher education. When the daily rhythm is set by notifications, jump cuts, and infinite scroll, that infrastructure erodes quietly.

What disappears with it is not only “concentration” in the narrow sense, but a whole set of personal development indicators: patience, delayed gratification, habit formation, and tolerance for frustration. Employers often complain that younger recruits struggle to stay with unglamorous tasks, are unused to detailed feedback, and are anxious when confronted with information that does not fit their preferred narratives. These are not uniquely Pakistani problems, but they land harder in a system where schooling is already fragile, career options are limited, and social safety nets are thin.

Then AI arrives on the scene, not as a slow drip of tools, but as a flood.

In less than three years, Pakistani students have gained access to chatbots that can write essays, solve coding assignments, summarize readings, and generate passable research proposals. UNESCO and the OECD have both warned that while generative AI can support learning, it also raises serious risks to academic integrity and critical thinking, especially where institutions are weak and assessment is poorly designed.

On paper, AI shifts the bottleneck: from “doing the work” to “thinking of the right prompt.” In practice, the quality of a prompt still depends on a student’s underlying grasp of the subject, language skills, and ability to judge whether an answer is plausible. An undergraduate who has not read the book will not magically become insightful because a chatbot has. The model can rearrange patterns already in the data; it cannot live a life, see Pakistan’s contradictions firsthand, or slowly learn how institutions really behave.

The Dawn incident showed what happens when professionals outsource basic editorial judgment. The AI system’s own meta-commentary, offering to craft a punchier front-page version, accidentally became the news. If newsrooms slip, students who are already used to copying assignments or downloading notes will hardly hesitate before pasting an AI-generated answer and moving on.

For employers, this creates a double opacity.

First, degrees and grades already say less than they used to about genuine competence, given uneven quality across universities and rampant rote learning. Second, AI now allows students to mask weaknesses in writing, analysis, or even coding during their time on campus. Firms discover the gap only when new hires confront workplace tasks that cannot be delegated to a chatbot, such as managing a difficult client, negotiating with a regulator, or navigating internal politics.

Many HR managers in Pakistan and elsewhere quietly describe a pattern with younger staff: comfortable with digital tools, weak at independent problem-solving, uneasy with ambiguity, and quick to disengage when tasks feel “boring.” Global commentary on Gen Z has tended to caricature these problems; nevertheless, universities and employers in the UK and the US have publicly warned that uncritical use of AI may accelerate the loss of critical thinking in student work.

Pakistan is likely to inherit the same problems, with fewer institutional resources to respond.

The risk goes beyond immediate employability. If Pakistan’s youth bulge grows up with a combination of attention-fragmenting social media habits and AI-driven shortcuts, the country may find itself with a large cohort that is digitally active but minimally capable of handling complex life choices, such as contracts, loans, legal obligations, or even family disputes. Organizations already struggling with punctuality, hierarchy, and basic professional conduct among younger staff will have to manage a workplace where the temptation to let machines “think” is built into everyday tools.

The phrase “Gen AI” has started to describe a generation that grows up with generative AI as background noise. If Gen Z is a warning signal, the professions that rely on human abilities should indeed be uneasy. A lawyer who cannot read long case files, a doctor who skims AI-generated summaries of medical literature without understanding the underlying evidence, and a civil servant who relies on models to draft policy briefs without wrestling with trade-offs all represent a quiet degradation of institutional capacity.

For Pakistan, the tragedy would be to confuse ease with progress. The country already spends only around 2 percent of GDP on education and struggles with large class sizes, weak teacher training, and a learning-adjusted years of schooling figure of just over 5, against an expected 9.4 years of schooling. In that context, fast adoption of AI without parallel investments in reading, reasoning, and institutional discipline risks deepening the divide between a small, globally competitive elite and a broad base of young people who are highly online but poorly prepared.

The choices are still open. Pakistani universities can redesign assessments to include closed-book exams, oral defenses, and project work that requires field exposure rather than solely text production. Regulators and accreditation bodies can issue clear guidelines on AI use in education that encourage transparency instead of cat-and-mouse bans. Employers can stop treating social media skills as a synonym for digital literacy, and instead reward sustained learning, writing, and numeracy.

Families and students face a more personal decision.

Generative AI will not disappear, nor will social media. The question is whether Pakistan’s young people use these tools as calculators that extend their abilities, or as crutches that replace them. No model can read a physical book for them, sit through the boredom of practice, or build the judgment that comes from real-life failure. Those are still human tasks.

If Pakistan wants its youth bulge to become an asset rather than a liability, it will need to do something unfashionable in a vertical-video age: make sustained attention, slow reading, and honest effort culturally desirable again, and treat AI as a demanding tool, not a magic shortcut.

Otherwise, “AI vs. the Pakistani youth” will not be a clever headline; it will be a verdict.